Large language models (LLMs) have become remarkably good at generating human-like responses, but that fluency can make them seem more credible than they are. LLMs can produce inaccurate answers, which can have severe consequences in high-stakes fields like healthcare and finance.

Researchers have been investigating uncertainty quantification methods to assess the reliability of predictions made by LLMs. A conventional approach is to submit the same prompt multiple times and check how consistent the model’s answers are. However, this only measures self-confidence: a model that gives the same wrong answer every time looks perfectly consistent, so the signal can be misleading even for the most advanced LLMs.
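The repeated-sampling check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `generate` is a hypothetical stand-in for whatever function calls the model, answers are compared by simple normalized string matching, and the agreement rate and entropy are common ways to summarize consistency, not necessarily the ones any particular study uses.

```python
import math
from collections import Counter
from typing import Callable, Tuple


def self_consistency(
    prompt: str,
    generate: Callable[[str], str],  # hypothetical: wraps a call to the model
    n_samples: int = 10,
) -> Tuple[str, float, float]:
    """Ask the same prompt n_samples times and summarize how much the answers agree.

    Returns (most common answer, agreement rate, entropy of the answer distribution).
    """
    if n_samples < 1:
        raise ValueError("n_samples must be at least 1")

    # Normalize lightly so trivial formatting differences do not count as disagreement.
    answers = [generate(prompt).strip().lower() for _ in range(n_samples)]
    counts = Counter(answers)

    top_answer, top_count = counts.most_common(1)[0]
    agreement = top_count / n_samples

    # Shannon entropy (in nats): 0.0 when every sample is identical.
    entropy = sum(
        -(c / n_samples) * math.log(c / n_samples) for c in counts.values()
    )
    return top_answer, agreement, entropy


# Stub "model" that is consistently wrong (the capital of Australia is Canberra).
def always_sydney(prompt: str) -> str:
    return "Sydney"


answer, agreement, entropy = self_consistency(
    "What is the capital of Australia?", always_sydney, n_samples=5
)
```

With the stub, every sample is identical, so agreement is maximal and entropy is zero even though the answer is wrong. That is the weakness of the approach: consistency reflects the model's confidence in itself, not the correctness of its answer.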

A new method aims to improve the evaluation of LLMs by detecting overconfidence, giving a more accurate picture of how far their predictions can be trusted. Recognizing the limitations of current uncertainty quantification methods lets researchers develop more effective strategies for mitigating the risks of overconfident AI models, and ultimately build more trustworthy AI systems.

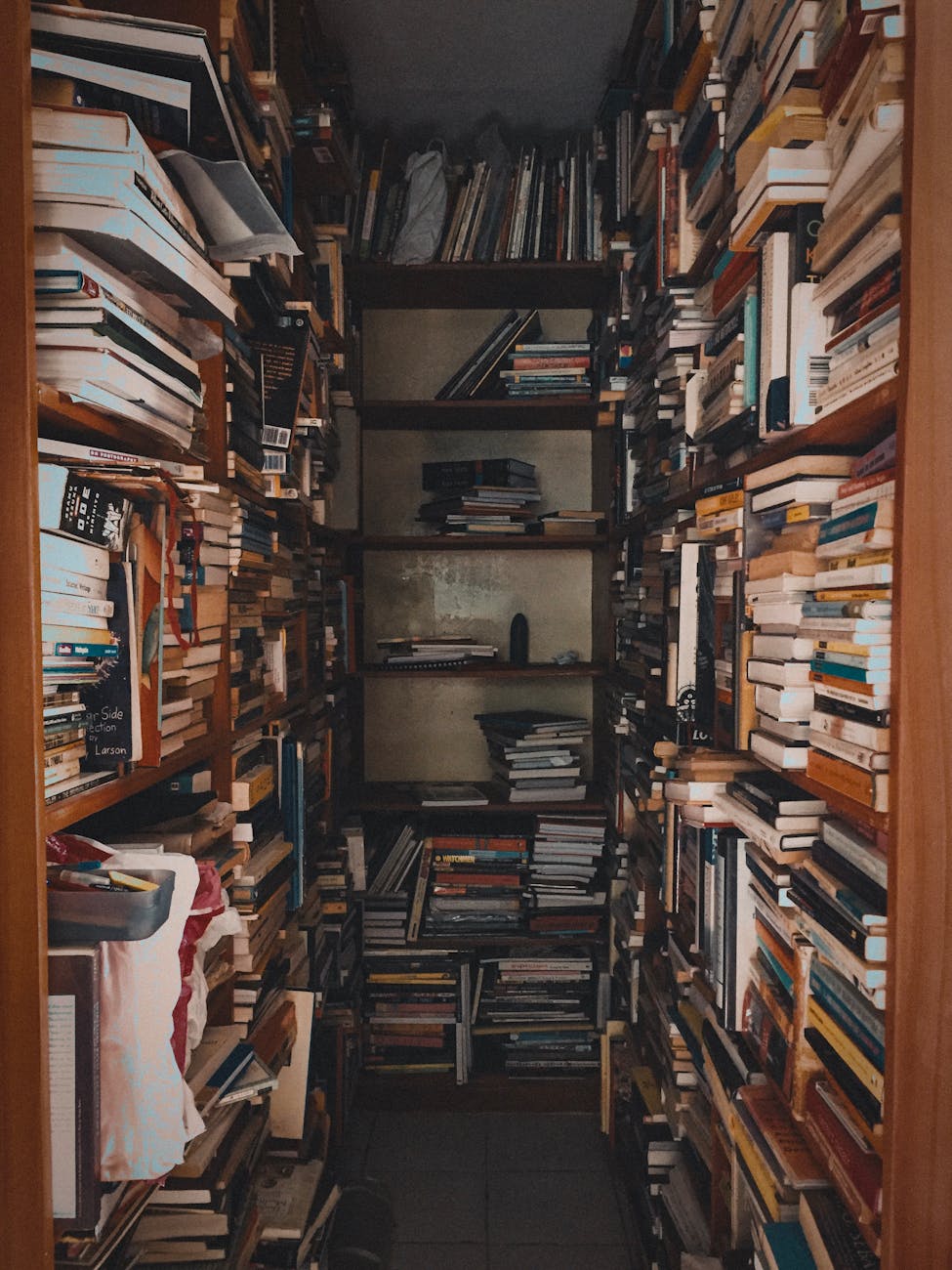

Photo by Ryan Delfin on Pexels
