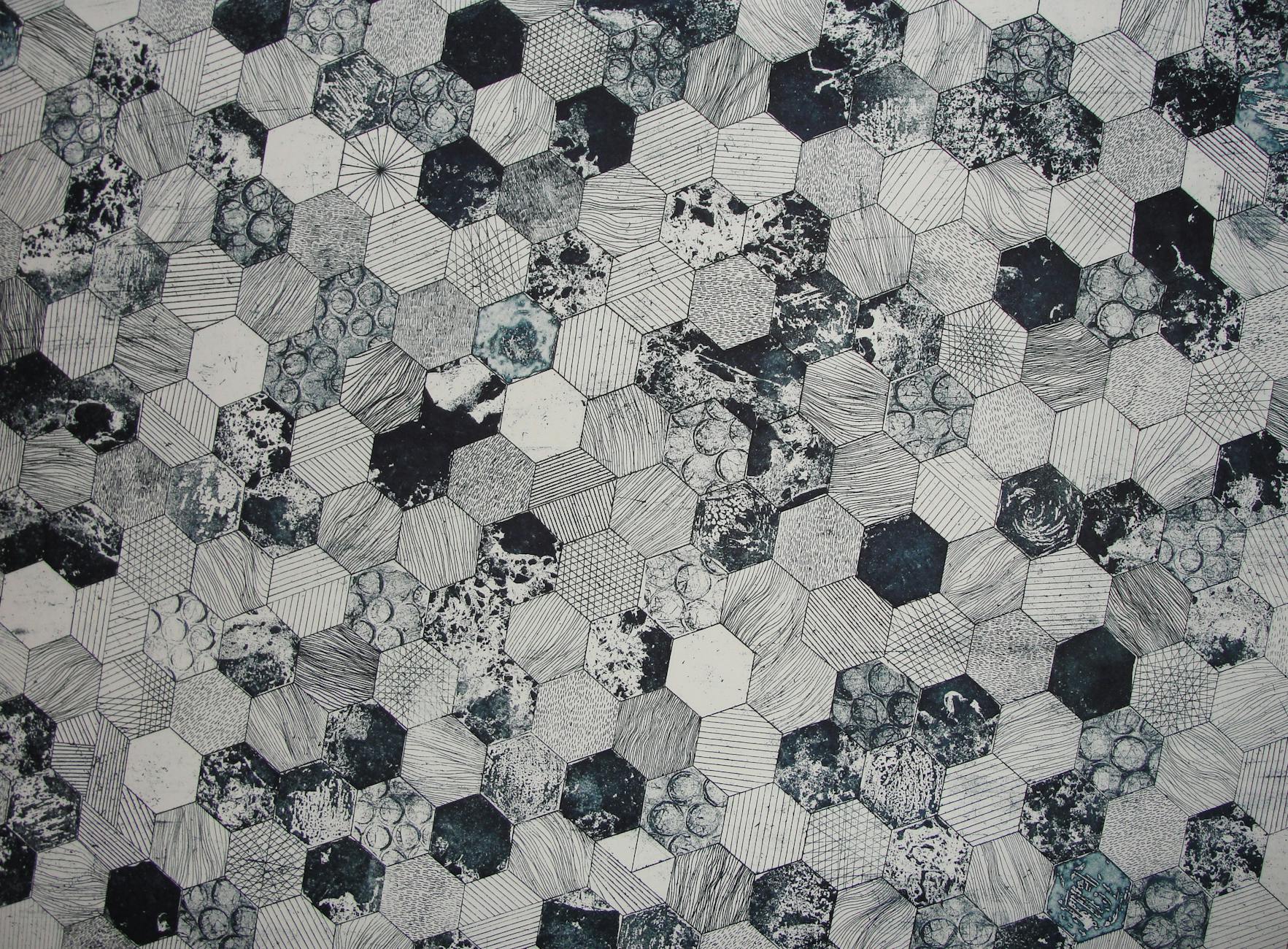

Photo by Iva Muškić on Pexels

A Reddit user, /u/Medium_Compote5665, has sparked debate with a technical breakdown of Large Language Models (LLMs). Setting the hype aside, the user describes LLMs as sophisticated statistical systems whose primary function is predicting the next linguistic unit, and which excel at compressing and reconstructing linguistic patterns learned from massive datasets. The post contends that LLMs operate without consciousness, intuition, or volition; their impressive performance stems instead from the scale of their training data and their ability to compress human language effectively. The user identifies probabilistic pattern matching as the key mechanism and suggests that LLMs simulate understanding by recognizing patterns, maintaining conversational flow, and adapting to user prompts. The original discussion can be found on Reddit: https://old.reddit.com/r/artificial/comments/1p2omg6/a_real_definition_of_an_llm_not_the/
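
To make the idea of "predicting the next linguistic unit" concrete, here is a minimal sketch of a toy bigram model: it counts which word follows which in a tiny corpus and turns those counts into probabilities. This is only an illustration of probabilistic next-token prediction, not how production LLMs are built (those use neural networks over subword tokens and far longer contexts). The function names and the sample corpus are hypothetical, chosen for this example and not taken from the Reddit post.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count, for each token, how often each other token follows it."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_distribution(counts, prev):
    """Turn raw follower counts for `prev` into a probability distribution."""
    followers = counts.get(prev)
    if not followers:
        return {}  # `prev` was never seen with a following token
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()}


def predict_next(counts, prev):
    """Return the most probable next token, or None if `prev` has no followers."""
    dist = next_token_distribution(counts, prev)
    if not dist:
        return None
    return max(dist, key=dist.get)


# Hypothetical toy corpus; real models train on vastly larger datasets.
corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)

print(next_token_distribution(model, "the"))
print(predict_next(model, "the"))
```

In this corpus "the" is followed by "cat" twice and "mat" once, so the model assigns "cat" a probability of 2/3 and picks it as the prediction. Nothing in the sketch involves understanding or intent: the output is determined entirely by the statistics of the training text, which is the point the post makes about LLMs at a much larger scale.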